Bit-Perfect, Materially Broken: Trustworthy Biodesign Challenges in DNA Data Storage

Bridging the gap between digital recovery and molecular integrity.

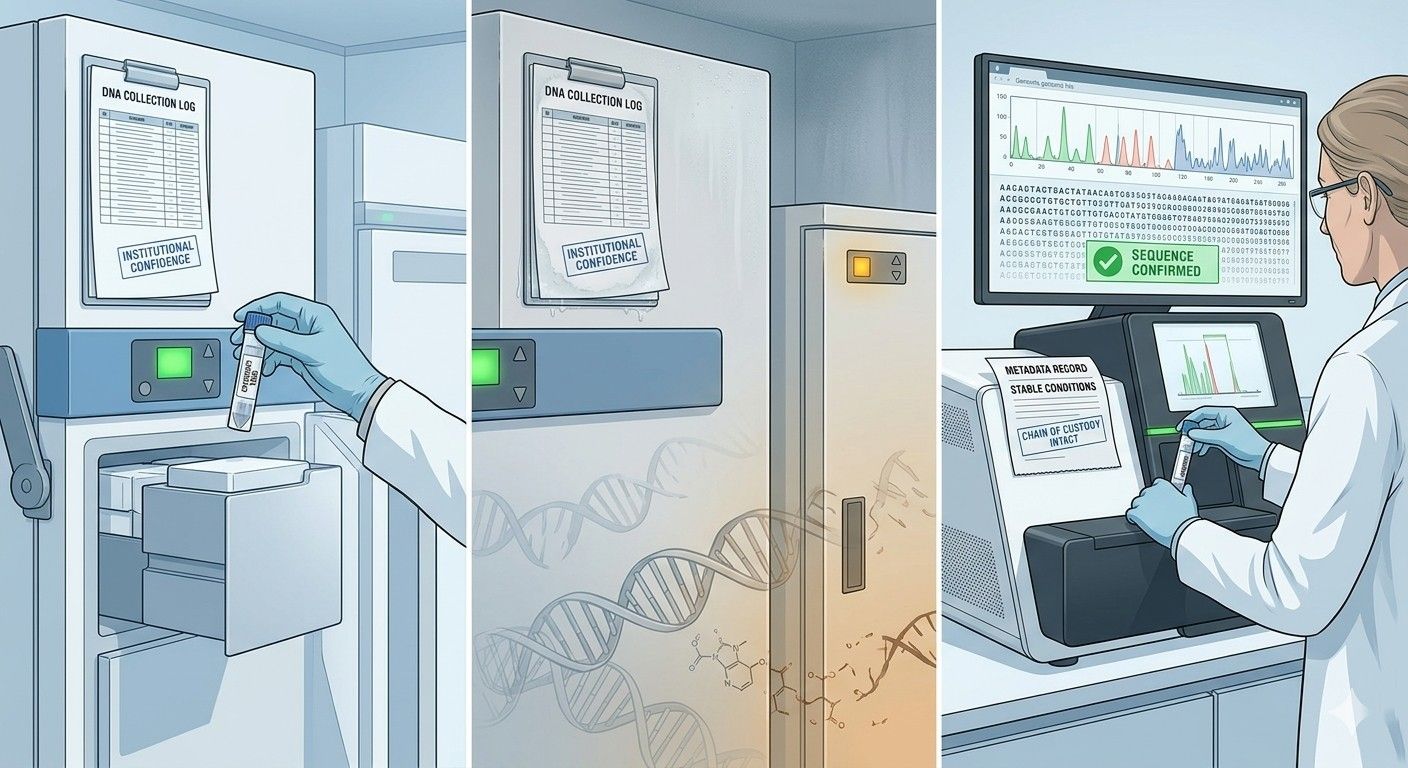

Somewhere in a laboratory freezer, a small tube holds a solution of synthetic DNA molecules. Encoded within their base sequences is a digital file, perhaps a medical record, a legal document, or a cultural heritage archive. To retrieve it, a researcher runs the sample through a sequencing machine, translates the molecular output back into binary, and reconstructs the original file. The process works. The data comes back intact. The system is considered to have succeeded.

But success at retrieval is not the same as being able to trust what was retrieved. That distinction is easy to overlook, and the DNA storage field has largely set it aside. This post argues that it should not.

What DNA Data Storage Actually Is

Every digital file, whether a photograph, a spreadsheet, or a genome sequence, is ultimately a string of ones and zeros. DNA, the molecule that encodes biological information in living cells, is also a string of information, written in a four-letter alphabet: adenine, thymine, cytosine, and guanine, abbreviated A, T, C, and G.

Binary data can be translated into sequences of DNA bases, synthesised as physical molecules in a laboratory, and stored until needed. To read the data back, the molecules are sequenced, meaning a machine reads their base order, and the result is translated back into the original digital file.
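To make the translation step concrete, here is a minimal sketch in Python. The two-bits-per-base mapping and the function names `encode` and `decode` are assumptions chosen for illustration, not the scheme of any particular storage system. Practical encoders typically also constrain the sequences they emit (for example, avoiding long runs of a single base) and add addressing and redundancy.

```python
# Illustrative mapping only (an assumption): two bits per base.
BITS_TO_BASE = {"00": "A", "01": "C", "10": "G", "11": "T"}
BASE_TO_BITS = {base: bits for bits, base in BITS_TO_BASE.items()}


def encode(data: bytes) -> str:
    """Translate bytes into a string of DNA bases, two bits at a time."""
    bits = "".join(f"{byte:08b}" for byte in data)
    return "".join(BITS_TO_BASE[bits[i:i + 2]] for i in range(0, len(bits), 2))


def decode(strand: str) -> bytes:
    """Translate a string of DNA bases back into the original bytes."""
    bits = "".join(BASE_TO_BITS[base] for base in strand)
    return bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
```

Under this mapping, `encode(b"Hi")` gives `CAGACGGC`, and decoding that strand returns the original two bytes.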

In simple terms: a digital file, such as a photograph, is converted into DNA, stored physically, and later converted back into the same photograph. If the reconstructed file looks identical to the original, the system is assumed to have worked correctly.

This assumption is what needs to be questioned.

DNA Is Not Just Digital. It's Physical.

Here is what makes DNA storage different from a hard drive or a cloud server in a way that matters beyond engineering detail. DNA is not an abstract medium. It is a molecule, and molecules exist in the physical world. They age. They react to their environment. They accumulate damage.

Every stage of the DNA storage pipeline leaves traces in the material. During synthesis, the process of writing data into DNA, certain sequences are produced in uneven quantities, introducing bias across the pool of molecules from the very beginning. During amplification, the process of making enough copies of the molecules to work with, that bias can compound, with some sequences replicated more reliably than others depending on temperature, chemistry, and protocol. During storage, the molecules degrade through chemical reactions: water breaks bonds, oxygen causes oxidative damage, and the longer the storage period and the less controlled the conditions, the more pronounced these effects become. Sequencing itself introduces characteristic error patterns. Contamination from prior samples, reagents, or the environment can persist across steps.

None of this is unusual, and none of it can be entirely avoided. These are intrinsic properties of working with DNA as a physical medium. Researchers who work with ancient DNA recovered from archaeological sites understand this intimately. They can read the damage patterns in a molecule and estimate how long ago the organism died, what conditions its remains were kept in, and whether the sample has been contaminated with more recently introduced material. The molecule's physical state carries a record of its own history.

In a DNA storage system, the same is true. The physical state of a sample at the point of retrieval reflects everything that has happened to it since it was first synthesised. That history is written into the molecule. The question is whether anyone is reading it.

What Current Systems Are Designed to Do

DNA storage systems have become technically sophisticated, and it is worth being precise about what they do well before identifying what they miss.

The central challenge in storing data as DNA is that the molecular channel is noisy. Synthesis makes mistakes. Sequencing makes mistakes. Some strands are lost during handling. To compensate, the field has developed robust error-correcting codes, mathematical schemes borrowed from telecommunications that add controlled redundancy to the data so that it can be reconstructed even when a significant fraction of molecules are damaged, missing, or misread. These codes work well. Studies have demonstrated error-free retrieval of DNA-encoded data after exposure to conditions designed to simulate decades of degradation. The codes absorb the physical damage and deliver the payload regardless.
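The principle of controlled redundancy can be sketched with the simplest possible scheme: one extra parity block, the XOR of all data blocks, which lets any single lost block be rebuilt. This is an illustrative stand-in, not what DNA storage systems deploy; published systems use far stronger codes, such as Reed-Solomon or fountain codes. Blocks here are plain byte strings of equal length, and the function names are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte strings together, position by position."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def add_parity(data_blocks):
    """Return the data blocks followed by one parity block."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def recover_missing(stored_blocks):
    """Rebuild the single block marked as None from the surviving blocks."""
    missing = [i for i, block in enumerate(stored_blocks) if block is None]
    if len(missing) != 1:
        raise ValueError("this toy scheme repairs exactly one missing block")
    survivors = [block for block in stored_blocks if block is not None]
    repaired = list(stored_blocks)
    repaired[missing[0]] = xor_blocks(survivors)
    return repaired


stored = add_parity([b"ACGT", b"TTGA", b"CCAA"])
stored[1] = None  # simulate a strand lost during storage or handling
repaired = recover_missing(stored)
```

The repaired list is byte-identical to what was originally stored, and nothing in the return value records that a block went missing. The payload survives; the evidence of loss does not.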

Sequencing pipelines also include quality control steps that filter out unreliable reads before they enter reconstruction. Laboratory information management systems, known as LIMS, document the handling and provenance of samples at each stage of the workflow, creating a record of the process. Biobanking standards such as ISO 20387 formalise these requirements, mandating chain-of-custody documentation and traceability as part of quality management. Together, these layers constitute a mature technical stack, each component doing its job well.

But they share a structural limitation. Error correction ensures the data survives. Quality control ensures the sequencing reads are usable. LIMS and standards ensure the process is recorded. None of them ask a deeper question: does the physical state of the DNA match what the records claim about it?

A Failure Mode That Currently Goes Undetected

To make this concrete, consider a specific scenario.

A DNA sample is synthesised, encoded with data, and placed into long-term storage. The laboratory records note the synthesis date, the storage conditions, and the chain of custody. Years later, the sample is retrieved. The sequencing run proceeds without incident. The error-correcting code reconstructs the data without errors. The system reports success.

But suppose that at some point during those years, the sample was exposed to elevated humidity, perhaps during a facility move, a storage unit malfunction, or a lapse in protocol. Hydrolytic damage accumulated in the molecules. Some strands degraded. The population of surviving molecules became skewed toward the more stable sequences, while compromised ones dropped out. The error-correcting code, designed precisely for this kind of situation, compensated for the dropout and delivered the payload regardless.

The metadata still records stable storage conditions. The sequencing output still produces the correct data. No alarm is raised, because no part of the system is designed to compare the molecular evidence (the damage patterns, the skewed distributions, the dropout signatures) against the provenance record. The inconsistency goes undetected, because the pipeline as built never looks for it.

This is not a catastrophic failure. The data came back. But as a structural feature of how these systems are built, it creates a category of problem that is systematically invisible: cases where the physical reality of a sample and the recorded account of its history have diverged, and where retrieval success masks rather than resolves that divergence.
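As a rough sketch of the comparison that is missing in this scenario, the Python below summarises per-strand read counts and checks them against what the metadata claims. Everything specific here is an assumption made for illustration: the function names, the `"stable"` label, and above all the threshold values, which are placeholders rather than empirically derived limits.

```python
from statistics import mean, pstdev


def coverage_summary(read_counts):
    """Summarise a dict of strand_id -> number of reads observed."""
    counts = list(read_counts.values())
    average = mean(counts)
    return {
        # Share of expected strands that produced no reads at all.
        "dropout_fraction": sum(1 for c in counts if c == 0) / len(counts),
        # Coefficient of variation: how unevenly reads are spread over strands.
        "coverage_cv": pstdev(counts) / average if average > 0 else float("inf"),
    }


# Hypothetical limits for a sample recorded as stably stored (placeholders).
STABLE_STORAGE_LIMITS = {"dropout_fraction": 0.01, "coverage_cv": 0.5}


def flag_inconsistencies(read_counts, recorded_conditions,
                         limits=STABLE_STORAGE_LIMITS):
    """List the signals that exceed what the recorded conditions would suggest."""
    if recorded_conditions != "stable":
        return []  # this sketch only models the "stable" claim
    summary = coverage_summary(read_counts)
    return [name for name, limit in limits.items() if summary[name] > limit]


skewed = {"s1": 40, "s2": 38, "s3": 0, "s4": 2}
flags = flag_inconsistencies(skewed, recorded_conditions="stable")
```

For the skewed counts above, both signals exceed their placeholder limits, so the check would report a mismatch with the recorded history even though decoding might still succeed.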

Other Fields Have Already Noticed This Problem

The idea that molecular signals constitute evidence about a sample's history, and that this evidence should be compared against recorded claims, is not new. It is standard practice in adjacent domains.

Researchers working with ancient DNA use damage patterns as a primary tool for authenticating samples. The characteristic chemical modifications that accumulate in DNA over centuries are specific enough to distinguish genuine ancient material from more recently introduced contamination, and to estimate how much contamination is present. The molecule's physical condition is treated as direct evidence about its provenance. In clinical and forensic genomics, similar logic applies: sequence-derived signatures are used to detect sample swaps, identify cross-contamination, and flag mismatches between what a sample's records say and what the molecular evidence implies.

These fields have developed the interpretive infrastructure to reason from physical evidence to trust assessments. DNA storage has not. The relevant signals are present in standard sequencing output, including error distributions, fragment length profiles, coverage patterns, and copy-number statistics, but they are currently discarded or collapsed into summary metrics that feed error correction, rather than being examined for what they reveal about the sample's condition and history.

What Is Missing

The gap is not in the data being produced. It is in what is done with it.

What current DNA storage systems lack is a way of treating molecular signals as evidence, comparing that evidence against recorded metadata, and forming a structured view of whether they are consistent. This is a different task from decoding. Decoding asks what information a molecule contains. The missing layer would ask whether the physical condition of that molecule matches the claims made about its history.

One way to think about the outcome of such a comparison is as a molecular trust state: a representation of how well the observed molecular evidence aligns with what is recorded about the sample.

This is not a binary judgement. Molecular evidence is inherently probabilistic. Different histories can produce similar patterns. Any meaningful assessment must express degrees of consistency and uncertainty rather than a simple pass or fail.

Trust, in this context, is not something a system either has or does not have. It is something that must be evaluated, with an understanding of what the evidence can and cannot resolve.
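One hedged way to picture such a graded assessment is as a probability distribution over candidate histories. The sketch below assumes a single observable (the number of reads showing a damage signature, out of a total), a binomial model, equal prior weight on each history, and made-up expected damage rates per history. None of these choices comes from an established method; they only illustrate how degrees of consistency, rather than pass or fail, might be expressed.

```python
from math import exp, log


def log_likelihood(damaged, total, rate):
    """Binomial log-likelihood, omitting the coefficient shared by all histories."""
    return damaged * log(rate) + (total - damaged) * log(1 - rate)


def trust_state(damaged, total, expected_rates, recorded_history):
    """Weigh each candidate history against the observed damage count."""
    scores = {h: log_likelihood(damaged, total, r)
              for h, r in expected_rates.items()}
    top = max(scores.values())  # subtract the maximum for numerical stability
    weights = {h: exp(s - top) for h, s in scores.items()}
    norm = sum(weights.values())
    posterior = {h: w / norm for h, w in weights.items()}
    return {
        "recorded_history": recorded_history,
        "support_for_record": posterior[recorded_history],
        "posterior": posterior,
    }


# Hypothetical expected damage rates per history (placeholders, not measured).
EXPECTED_RATES = {"stable": 0.01, "humidity_excursion": 0.05}

state = trust_state(damaged=50, total=1000,
                    expected_rates=EXPECTED_RATES, recorded_history="stable")
```

With these placeholder rates, 50 damaged reads out of 1000 leave almost no support for the recorded "stable" history, 10 out of 1000 support it strongly, and a count near 25 leaves the two histories almost equally supported. That last case is the important one: the output expresses what the evidence cannot resolve instead of forcing a verdict.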

Why This Matters

For now, DNA storage is largely confined to research settings where experienced human oversight compensates for what the pipeline cannot detect. Researchers notice anomalies, flag unusual sequencing behaviour, and maintain institutional knowledge about particular samples and their histories. The system's blind spot is covered by people working alongside it.

That compensation does not scale. As DNA storage moves toward large institutional archives, cross-organisational data exchange, and records with legal, medical, or scientific weight, the pipeline becomes the authoritative system. Metadata from years or decades earlier is taken at face value. Retrieval success is treated as confirmation of integrity. The informal checks disappear, and what remains is a system that is very good at recovering data and structurally unable to evaluate the conditions under which that data should be trusted.

This shift is reinforced by a broader trend. Data pipelines are increasingly embedded in software systems that operate with minimal human oversight, including AI-driven workflows that rely on automated retrieval and processing. In these contexts, successful decoding is often treated as sufficient evidence of correctness. But automated systems do not question inconsistencies unless they are explicitly designed to do so. A DNA-based record can be retrieved perfectly and still carry physical signatures that contradict its recorded history, and no part of the system will flag that discrepancy.

Decoding success is not the same as trust. Metadata is not the same as truth. Molecular signals already carry information about the history and condition of a sample, and they are simply not being read for that purpose. Building the infrastructure to read them is not a matter of collecting new data. It is a matter of deciding that the question is worth asking.

The DNA storage field has made remarkable progress on retrieval. The next problem, verification, is different in kind, and the sooner it is recognised as a distinct engineering and design challenge, the better positioned the field will be to address it before the stakes make the oversight costly.

This is a research direction, not a finished answer. The questions it opens up are genuinely hard and genuinely open: how to represent molecular condition in a structured way, how to reason probabilistically about sample history, and how to build the comparison layers that a genuine molecular trust state would require. But they are the right questions to be asking now, before the infrastructure that ignores them becomes too entrenched to change.

If the intersection of biological materials, information integrity, and the design of trustworthy systems interests you, this is a space worth watching. The work is early, the problems are real, and the field needs people willing to take the physical seriously alongside the digital.

Follow along as this research develops. New pieces go out through the Biodesign Academy newsletter. Subscribe here if you want them in your inbox.